Imagine you are working in an electrical workshop and measuring voltage with a voltmeter. The meter shows 220 V, but the actual voltage is 235 V. A 15 V discrepancy (about 6%) may look harmless, but in electrical systems it can lead to incorrect calculations, equipment damage, or safety risks.

This is where Calibration of Instruments becomes extremely important.

In electrical engineering, measuring instruments must provide accurate and reliable readings. If instruments are not calibrated regularly, their readings may drift over time due to aging components, temperature changes, mechanical wear, or environmental conditions.

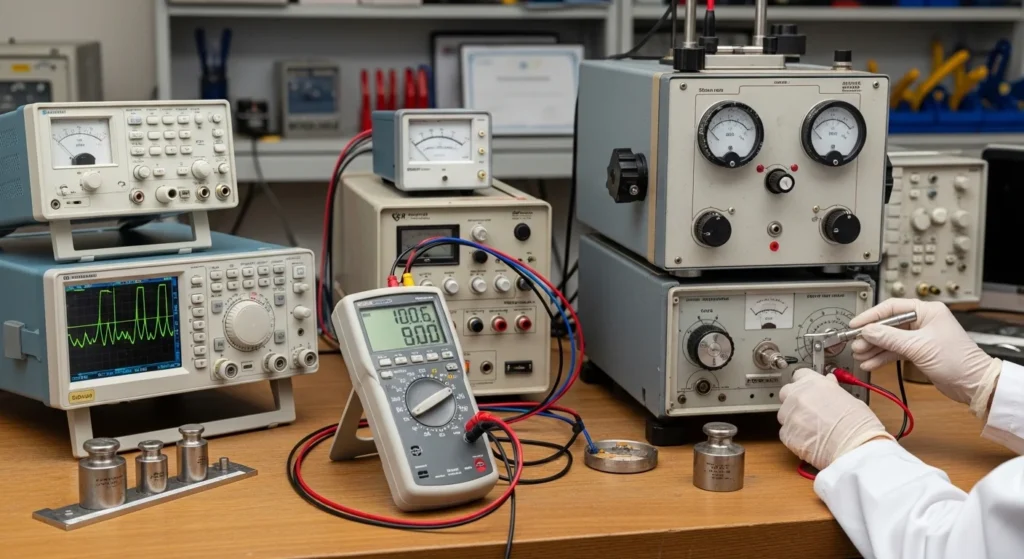

Calibration ensures that measuring devices such as voltmeters, ammeters, multimeters, oscilloscopes, and power analyzers provide correct values according to standard references.

In this article, you will learn:

- What calibration of instruments is

- Calibration working principle

- Types of calibration methods

- Main components used in calibration

- Calibration advantages and disadvantages

- Practical applications in electrical systems

- Troubleshooting calibration problems

- Future trends in calibration technology

This guide is written in simple and clear language, making it ideal for electrical students, technicians, engineers, and beginners.

2. What is Calibration of Instruments?

Calibration of Instruments is the process of comparing the measurement of an instrument with a known standard reference to check and adjust its accuracy.

In simple words:

Calibration ensures that an instrument shows the correct measurement value.

Simple Explanation

Every measuring instrument may slowly lose accuracy over time. Calibration identifies this error and corrects it.

During calibration:

- A standard reference instrument with very high accuracy is used.

- The instrument under test is compared with the standard.

- Any difference in readings is recorded.

- Adjustments are made if necessary.

Practical Example

Suppose a digital voltmeter reads 100 V while a certified standard meter connected to the same source shows 102 V.

This means the voltmeter is reading 2 V low, an error of about 2%. Calibration quantifies this difference so that it can be corrected.
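The arithmetic behind this example can be sketched in a few lines of Python. This is only an illustration: the function name `measurement_error` is hypothetical, and the sign convention (instrument reading minus standard reading) is an assumption chosen so that a negative result means the instrument reads low.

```python
def measurement_error(dut_reading: float, reference_reading: float) -> tuple[float, float]:
    """Return (absolute error, percent error) of the instrument under test.

    Assumed convention: error = instrument reading - reference reading,
    so a negative error means the instrument reads low.
    """
    absolute = dut_reading - reference_reading
    percent = 100.0 * absolute / reference_reading
    return absolute, percent


# Values from the example: voltmeter reads 100 V, standard shows 102 V.
abs_err, pct_err = measurement_error(100.0, 102.0)
print(f"Absolute error: {abs_err:+.2f} V")  # Absolute error: -2.00 V
print(f"Percent error:  {pct_err:+.2f} %")  # Percent error:  -1.96 %
```

Expressing the error as a percentage of the reference value makes it easier to compare against an accuracy specification that is stated in percent.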

3. Calibration of Instruments Working Principle

The calibration working principle is based on comparison with a standard measurement.

The main goal is to determine how much an instrument deviates from the correct value.

Step-by-Step Calibration Process

Select a Standard Instrument

- A highly accurate reference instrument is chosen.

Apply Known Input

- A known voltage, current, or signal is applied.

Measure the Output

- The instrument under test measures the signal.

Compare Readings

- The measured value is compared with the standard.

Calculate Error

- The error is the difference between the instrument's reading and the value given by the standard.

Adjust the Instrument

- If possible, adjustments are made to improve accuracy.
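The comparison steps above can be sketched as a small Python routine. This is a minimal illustration, not a real calibration procedure: the class `CalPoint`, the function `check_points`, the test values, and the ±0.5 V tolerance are all hypothetical and would come from the instrument's specification in practice.

```python
from dataclasses import dataclass


@dataclass
class CalPoint:
    applied: float  # known input, as given by the reference standard
    reading: float  # value shown by the instrument under test


def check_points(points, tolerance):
    """Compare each reading with the applied value and flag out-of-tolerance points."""
    results = []
    for p in points:
        error = p.reading - p.applied
        within_tolerance = abs(error) <= tolerance
        results.append((p.applied, p.reading, error, within_tolerance))
    return results


# Hypothetical test points spread across the instrument's range (volts).
points = [CalPoint(10.0, 10.02), CalPoint(50.0, 50.4), CalPoint(100.0, 99.1)]
results = check_points(points, tolerance=0.5)

for applied, reading, error, ok in results:
    status = "PASS" if ok else "ADJUST"
    print(f"applied={applied:7.2f} V  reading={reading:7.2f} V  error={error:+.2f} V  {status}")
```

Checking several points across the range, rather than a single value, helps reveal whether the error is a constant offset or grows with the input.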

Simple Analogy

Think of calibration like setting the correct time on a watch.

If your watch shows 10:05, but the real time is 10:00, you adjust it to match the correct time.

Similarly, calibration adjusts instruments to match true measurement standards.

4. Types of Calibration

Calibration methods vary depending on the type of instrument and industry.

Primary Calibration

Primary calibration uses national or international standards.

These standards are extremely accurate and maintained by certified laboratories.

Examples:

- National measurement laboratories

- Government standard labs

Primary calibration is rarely performed in ordinary industrial settings.

Secondary Calibration

Secondary calibration compares instruments with secondary standard devices.

These standards are calibrated using primary standards.

Industries commonly use this method.

Examples:

- Industrial laboratories

- Electrical testing labs

Automatic Calibration

Many modern instruments can perform self-calibration automatically.

The system adjusts internal parameters to maintain accuracy.

Examples:

- Digital multimeters

- Advanced oscilloscopes

- Smart measurement devices

Manual Calibration

Manual calibration is performed by technicians using external equipment.

Steps include:

- Applying standard signals

- Adjusting instrument settings

- Recording measurement errors

This method is common in workshops and laboratories.

5. Main Components Used in Calibration

Calibration systems include several important components.

Standard Reference Instrument

This is the most accurate instrument used for comparison.

Examples:

- Precision voltage source

- Standard current source

- Calibration reference meter

Device Under Test (DUT)

The instrument being calibrated is called the Device Under Test.

Examples include:

- Voltmeter

- Ammeter

- Multimeter

- Oscilloscope

Signal Generator

A signal generator produces known electrical signals for testing.

Examples:

- Voltage signals

- Frequency signals

- Current signals

Measurement System

A monitoring system records measurement results and errors.

Modern calibration systems use computer-based measurement software.
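As a rough idea of what such software does, the sketch below records readings and their errors as CSV text using only the Python standard library. The function name `results_to_csv` and the column names are hypothetical; real calibration software typically stores much more (instrument ID, date, uncertainty, operator).

```python
import csv
import io


def results_to_csv(rows):
    """Turn (applied, reading) pairs into CSV text with a computed error column."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["applied", "reading", "error"])
    for applied, reading in rows:
        # Round to suppress floating-point noise in the recorded error.
        writer.writerow([applied, reading, round(reading - applied, 6)])
    return buffer.getvalue()


# Hypothetical readings in volts.
report = results_to_csv([(10.0, 10.02), (100.0, 99.1)])
print(report)
```

The same text could be written to a file instead of printed, which gives a simple, traceable record of each calibration run.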

Adjustment Mechanism

Some instruments include internal adjustment controls.

These allow technicians to correct measurement errors.
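In digital instruments, this adjustment is often a stored gain and offset rather than a physical trimmer. The sketch below shows one common approach, a two-point linear correction. It assumes the instrument's error is linear across its range, and the function names and example readings are hypothetical.

```python
def two_point_correction(low_ref, low_reading, high_ref, high_reading):
    """Compute gain and offset so that corrected = gain * reading + offset.

    Assumes the instrument's response is linear between the two points.
    """
    if high_reading == low_reading:
        raise ValueError("the two readings must differ")
    gain = (high_ref - low_ref) / (high_reading - low_reading)
    offset = low_ref - gain * low_reading
    return gain, offset


def apply_correction(reading, gain, offset):
    """Apply the linear correction to a raw instrument reading."""
    return gain * reading + offset


# Hypothetical readings: standard applies 0 V and 100 V,
# instrument shows 0.5 V and 98.5 V.
gain, offset = two_point_correction(0.0, 0.5, 100.0, 98.5)
corrected = apply_correction(98.5, gain, offset)
print(f"gain={gain:.4f}, offset={offset:.4f}, corrected={corrected:.2f} V")
```

A two-point correction removes offset and gain errors but not nonlinearity, so results are usually verified afterward at intermediate points.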

6. Advantages of Calibration of Instruments

Calibration offers many practical benefits in electrical systems.

• Ensures high measurement accuracy

• Improves equipment reliability

• Reduces risk of system failure

• Maintains quality standards in industry

• Helps detect instrument errors early

• Improves safety in electrical systems

• Maintains compliance with international standards

For engineers and technicians, calibration is essential for trustworthy measurements.

7. Disadvantages / Limitations

Although calibration is important, it also has some limitations.

• Requires special equipment

• Can be time-consuming

• Needs trained technicians

• Calibration equipment may be expensive

• Instruments may need to be taken offline

Despite these limitations, calibration is necessary for maintaining measurement accuracy.

8. Calibration Applications

Calibration is used in almost every electrical and electronic field.

Industrial Applications

Factories rely on calibrated instruments to maintain production quality.

Examples:

- Power plant monitoring

- Industrial automation

- Electrical testing laboratories

Electrical Maintenance

Technicians calibrate measuring tools regularly.

Examples:

- Multimeters

- Clamp meters

- Oscilloscopes

Power Systems

Calibration ensures accurate monitoring of electrical parameters.

Examples:

- Voltage measurement

- Current monitoring

- Frequency measurement

Research and Laboratories

Scientific experiments require extremely accurate measurements.

Calibration helps ensure reliable data collection.

9. Comparison: Calibration vs Validation

| Feature | Calibration | Validation |
| --- | --- | --- |
| Purpose | Adjust instrument accuracy | Verify system performance |
| Method | Compare with standard | Test system functionality |
| Adjustment | Adjustment possible | No adjustment required |
| Focus | Measurement accuracy | Process reliability |

Understanding the difference between calibration and validation helps engineers maintain both measurement accuracy and system performance.

10. Selection Guide: How to Choose Calibration Equipment

Choosing the right calibration equipment is important.

Accuracy Level

The calibration device should be significantly more accurate than the instrument being tested, so that its own uncertainty does not mask the instrument's error.
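One way to make this check concrete is an accuracy ratio: the tolerance of the instrument under test divided by the tolerance of the reference. A ratio of at least 4:1 is a commonly cited rule of thumb, though the required ratio depends on the applicable standard or quality system. The function names and example tolerances below are hypothetical.

```python
def accuracy_ratio(dut_tolerance: float, reference_tolerance: float) -> float:
    """Ratio of the instrument's tolerance to the reference's tolerance."""
    if reference_tolerance <= 0:
        raise ValueError("reference tolerance must be positive")
    return dut_tolerance / reference_tolerance


def is_reference_adequate(dut_tolerance, reference_tolerance, min_ratio=4.0):
    """Check the reference against a minimum ratio (4:1 assumed as a rule of thumb)."""
    return accuracy_ratio(dut_tolerance, reference_tolerance) >= min_ratio


# Hypothetical tolerances in volts.
print(is_reference_adequate(0.5, 0.1))   # reference is five times tighter
print(is_reference_adequate(0.5, 0.25))  # reference is only twice as tight
```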

Measurement Range

Ensure the calibration instrument supports the required range:

- Voltage

- Current

- Frequency

Environmental Conditions

Calibration equipment should work properly in laboratory conditions such as:

- Stable temperature

- Controlled, non-condensing humidity

- Low vibration

Ease of Use

Modern calibration devices should have:

- Digital display

- Automatic measurement

- Data recording

These features help technicians work more efficiently.

11. Common Problems & Solutions

Why do instruments lose accuracy?

Instruments lose accuracy due to:

- Component aging

- Temperature changes

- Mechanical wear

- Electrical interference

Regular calibration detects this drift and corrects it before it affects measurements.

How often should instruments be calibrated?

Typical calibration intervals, depending on the instrument and how heavily it is used, are:

- 6 months

- 12 months

High-precision equipment may require more frequent calibration.
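Tracking due dates is simple to automate. The sketch below, using only the Python standard library, computes the next due date from the last calibration date and an interval in months. The helper names `add_months` and `is_due` and the example dates are hypothetical.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Return the date `months` later, clamping to the last day of short months."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def is_due(last_calibrated: date, interval_months: int, today: date) -> bool:
    """True once the calibration interval has elapsed."""
    return today >= add_months(last_calibrated, interval_months)


# Hypothetical instrument on a 12-month interval.
last = date(2024, 1, 15)
print("Next due:", add_months(last, 12))
print("Due now? ", is_due(last, 12, today=date(2025, 2, 1)))
```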

What happens if instruments are not calibrated?

If instruments are not calibrated:

- Measurements become inaccurate

- Equipment may malfunction

- Safety risks increase

- Product quality decreases

Can digital instruments calibrate themselves?

Some modern instruments include automatic self-calibration features, but periodic professional calibration is still recommended.

12. Future Trends in Calibration Technology

Calibration technology continues to evolve with modern electrical systems.

Smart Calibration Systems

Modern systems use AI-assisted calibration and automatic adjustment.

Remote Calibration

IoT technology allows engineers to perform remote calibration and monitoring.

Digital Calibration Certificates

Many industries are replacing paper certificates with digital calibration records.

Automated Calibration Labs

Fully automated laboratories are being developed for faster and more accurate calibration.

These innovations will improve measurement accuracy and efficiency in the future.

13. Conclusion

Calibration of instruments is one of the most important practices in electrical engineering. Accurate measurements are essential for safe operation, system reliability, and correct analysis of electrical parameters.

Over time, every measuring device can develop small errors due to environmental factors, aging components, and mechanical wear. Calibration helps detect these errors by comparing instruments with certified standards. If necessary, adjustments are made to restore measurement accuracy.

Engineers, technicians, and students should understand how calibration works, where it is applied, and what its advantages and limitations are. Regular calibration ensures that electrical measurements remain reliable and trustworthy.

In modern industries such as power generation, manufacturing, automation, and research laboratories, calibration plays a critical role in maintaining quality and safety standards.

For anyone working with electrical instruments, calibration is not just a maintenance task; it is a fundamental requirement for accurate engineering practice and professional reliability.